We now have agents embedded across all of our microservices, backed by a rapidly growing number of tailor-made agents, skills, and commands. They’re not sitting on the side as optional helpers; they’re woven into every part of our development workflow. Log reviews, code reviews, merges, deployments, monitoring, alerts. All of it.

The team ships with a level of confidence we didn’t have before. That’s the real shift.

How This Works For Us

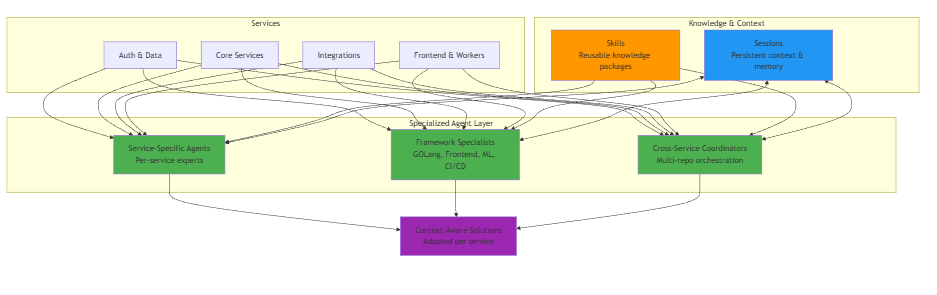

We built specialists, not generalists. Each service has agents that are experts in it. They understand our intricacies and our infrastructure, and they adapt their solutions and involvement accordingly.

These aren’t generic AI assistants giving generic advice. They know our stack, our patterns, our history. They know why we made certain architectural decisions three months ago because they’re just as much in the loop as our team members.

What We Mean by Agents, Skills, and Commands

Initially, like most of the space, we assumed we needed an agent for everything. “Let’s have an agent for A, B and C.” We soon realized we had created a bloat of agents, most of which shouldn’t have been agents at all.

Agents are intended to handle complex, multistep tasks autonomously – things like coordinating deployments across services or analysing security vulnerabilities. They make decisions, they collaborate with other agents, they adapt to an application and they perform.

Skills turned out to be just as important. They’re packages of knowledge about our specific systems. “What are our API conventions?” “When do we use which caching strategy?” Skills get loaded when needed, and any agent can use them. This means we’re not duplicating knowledge across dozens of agents. My favourite thing about them is that they’re predictable scripts: inside our autonomous framework, they still let us control the workflow.
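A minimal sketch of the "loaded when needed, shared by any agent" idea, assuming a simple in-memory registry. `SkillRegistry`, `Agent`, the skill names, and the skill contents are all hypothetical and only illustrate the pattern; they are not taken from the framework itself.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SkillRegistry:
    """Hypothetical registry: maps skill names to loaders and caches the result."""
    _loaders: Dict[str, Callable[[], str]] = field(default_factory=dict)
    _cache: Dict[str, str] = field(default_factory=dict)
    load_count: int = 0  # how many skills were actually loaded

    def register(self, name: str, loader: Callable[[], str]) -> None:
        self._loaders[name] = loader

    def get(self, name: str) -> str:
        # Load lazily on first use, then serve every agent from the cache.
        if name not in self._cache:
            self._cache[name] = self._loaders[name]()
            self.load_count += 1
        return self._cache[name]


@dataclass
class Agent:
    name: str
    skills: SkillRegistry

    def build_context(self, needed: List[str]) -> str:
        # An agent pulls in only the skills the current task needs.
        return "\n".join(self.skills.get(skill) for skill in needed)


registry = SkillRegistry()
# Placeholder skill contents, invented for the example.
registry.register("api-conventions", lambda: "Use plural resource names.")
registry.register("caching-strategy", lambda: "Invalidate cache entries on write.")

billing = Agent("billing-agent", registry)
search = Agent("search-agent", registry)

billing_ctx = billing.build_context(["api-conventions"])
search_ctx = search.build_context(["api-conventions", "caching-strategy"])
```

Both agents read the same `api-conventions` skill, but it is loaded only once, which is the property that keeps knowledge from being duplicated per agent.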

Commands (now merged with skills) are the simple, repeatable actions. Most of what we thought were “agents” became commands – create a merge request, run benchmarks, update documentation. They’re fast, predictable, and composable.

The Architecture of Our Agentic Framework

The architecture matters because it scales. Team members can create agents easily. Skills capture institutional knowledge. Agents orchestrate complex workflows. Each serves its purpose within the limits of its service.

The architecture is built on four concepts: hooks, checkpoints, sessions, and consensus.

Hooks are just triggers – when you commit code, when a deployment finishes, when Datadog fires an alert, when a specific action is performed. Agents activate automatically at these moments.
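The trigger idea can be sketched as a small event bus, assuming handlers are plain functions registered per event name. `HookBus`, the event names, and the payload fields below are hypothetical; a real setup would receive these events from the version control system, the deploy pipeline, or the monitoring tool.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Handler = Callable[[Dict[str, str]], str]


class HookBus:
    """Hypothetical event bus: agents subscribe to named events."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def on(self, event: str) -> Callable[[Handler], Handler]:
        # Decorator that registers a handler for one event name.
        def decorator(fn: Handler) -> Handler:
            self._handlers[event].append(fn)
            return fn
        return decorator

    def emit(self, event: str, payload: Dict[str, str]) -> List[str]:
        # Run every handler registered for the event, in registration order.
        return [handler(payload) for handler in self._handlers.get(event, [])]


hooks = HookBus()


@hooks.on("commit")
def review_commit(payload: Dict[str, str]) -> str:
    return f"code-review agent inspecting {payload['sha']}"


@hooks.on("deployment.finished")
def verify_deployment(payload: Dict[str, str]) -> str:
    return f"monitoring agent verifying {payload['service']}"


@hooks.on("alert")
def triage_alert(payload: Dict[str, str]) -> str:
    return f"on-call agent triaging {payload['title']}"


results = hooks.emit("commit", {"sha": "abc123"})
```

Events with no registered handler simply return an empty list, so adding a new trigger never requires changes to existing agents.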

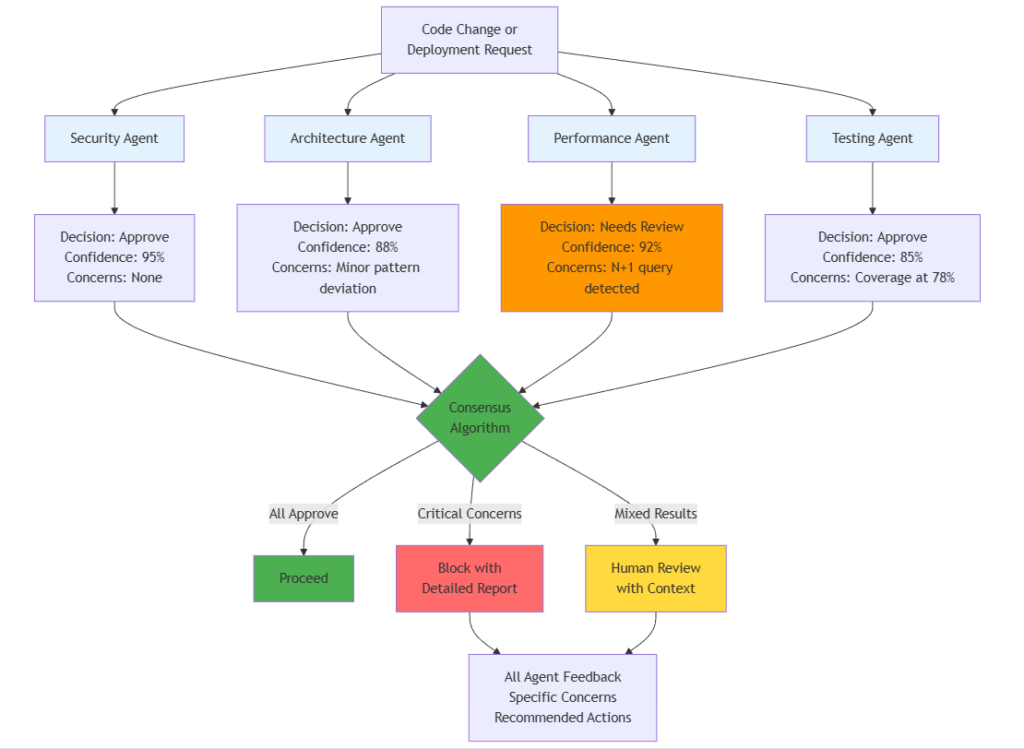

Consensus is probably the most important piece. For critical decisions – deployments, architecture changes, security – multiple agents review independently. Security agent, architecture agent, performance agent. They each vote with a confidence score. If there’s disagreement, we see exactly what each agent is worried about and why.
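One way to picture the voting step is the sketch below. The aggregation rule is an assumption made for illustration: a change is approved only when every agent approves with at least a minimum confidence, and anything else is surfaced as dissent. `Vote`, `Decision`, `decide`, and the 0.7 threshold are hypothetical names and values.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Vote:
    agent: str
    approve: bool
    confidence: float  # 0.0 (no confidence) to 1.0 (certain)
    concern: str = ""


@dataclass(frozen=True)
class Decision:
    approved: bool
    dissent: List[str]  # one human-readable line per objecting agent


def decide(votes: List[Vote], min_confidence: float = 0.7) -> Decision:
    """Assumed rule: unanimous approval, each vote above the confidence floor."""
    if not votes:
        raise ValueError("at least one vote is required")
    dissent = [
        f"{v.agent} ({v.confidence:.2f}): {v.concern or 'low confidence'}"
        for v in votes
        if not v.approve or v.confidence < min_confidence
    ]
    return Decision(approved=not dissent, dissent=dissent)


votes = [
    Vote("security", approve=True, confidence=0.90),
    Vote("architecture", approve=True, confidence=0.85),
    Vote("performance", approve=False, confidence=0.80,
         concern="p99 latency regression risk"),
]
decision = decide(votes)
```

Here `decision.approved` is `False`, and `decision.dissent` names the performance agent and its concern, which is the "we see exactly what each agent is worried about" part.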

How It Actually Orchestrates: Workflows as Code

The piece that ties everything together is workflow orchestration, and we made a specific choice: workflows are defined in JSON, not code.

Here’s a simplified example of what a deployment workflow looks like:

This means we can modify workflows without touching application code. We version them like code, we review changes, but the barrier to improving the system is much lower.
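To make the separation concrete, here is a hypothetical sketch of a JSON-defined workflow and a tiny runner that interprets it. The schema (`name`, `steps`, `id`, `command`), the command names, and the stop-on-first-failure behaviour are assumptions for illustration, not the actual workflow format.

```python
import json
from typing import Callable, Dict, List

# Hypothetical workflow definition; in practice this would live in its own
# versioned .json file rather than inside application code.
WORKFLOW_JSON = """
{
  "name": "deploy-service",
  "steps": [
    {"id": "tests", "command": "run_tests"},
    {"id": "review", "command": "request_consensus"},
    {"id": "release", "command": "deploy"}
  ]
}
"""


def run_workflow(definition: str, commands: Dict[str, Callable[[], bool]]) -> List[str]:
    """Run steps in order and stop at the first one that fails."""
    workflow = json.loads(definition)
    log: List[str] = []
    for step in workflow["steps"]:
        ok = commands[step["command"]]()
        log.append(f"{step['id']}: {'ok' if ok else 'failed'}")
        if not ok:
            break
    return log


# Stub commands standing in for real actions; consensus is made to fail here.
commands: Dict[str, Callable[[], bool]] = {
    "run_tests": lambda: True,
    "request_consensus": lambda: False,
    "deploy": lambda: True,
}

log = run_workflow(WORKFLOW_JSON, commands)
```

Reordering steps or adding a new one is an edit to the JSON document only; the runner and the commands stay untouched.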

The Moment We Realized Specialization Was the Key

We spent the first month building general-purpose agents. “Code reviewer.” “Deployment agent.” “Documentation agent.” Generic names, generic capabilities.

The problem showed up gradually. Generic agents would make suggestions that worked in theory but didn’t fit our specific services. They’d lose context as services evolved—a pattern that was valid last month might not be valid now after we’ve modified a layer.

Generic agents couldn’t track the changes and evolution of each service. They’d reference outdated patterns, suggest approaches we’d moved away from, miss the nuances of why we made certain architectural choices.

So we rebuilt. Not one agent, but a range of specialists for each service. Each one trained on that service’s history, patterns, and evolution.

The difference was immediate. Accuracy went up, false positives dropped, and the team started trusting the suggestions. Generic AI is helpful. AI that knows your architecture – and tracks how it changes – is transformative.

We were also pleasantly surprised by the role agents played in onboarding and in overall team spirit. New developers became productive in days, not weeks, and the team quickly stopped being sceptical once they saw everything in place and working.

Zynap Inside AI is an ongoing series where our team shares what we’re actually building and learning. If you missed the earlier chapters, start with Chapter 1: The Agentic Wave and Chapter 2: How AI Agents Transformed My Product Workflows.